To make a longish story short, I’ve recently had a chance to demo the Oculus Rift and Samsung Gear VR head-mounted virtual reality (VR) displays with advanced video and game demos that hint at the huge entertainment potential of these devices. Though these kinds of personal VR systems have a long way to go in terms of both applications and functionality before becoming household tech, I was impressed by how well the video component works at this point.

What pulled me out of the experience with the more immersive demos was the static 2-channel audio (and, less so, feeling the cables – including the headphone cable – in the way when moving around). It seems this is a common opinion – the Oculus team, for instance, is working hard on a better audio solution, having recently licensed a technology called RealSpace 3D for integration with the latest Rift prototypes.

What follows is something of a departure from our regularly-scheduled programming – a short introduction to headphone-based 3D audio and to two companies working to make it happen with existing VR technology.

3D (headphone) audio basics

Any kind of competent solution for immersive 3D audio with headphones has to include at least two components.

The first is delivering realistic audio positioning through a stereo headphone of the type VR headset users are likely to be wearing. Anyone who has heard a good binaural recording, such as the well-known Virtual Barber Shop, knows that this is very much possible.

The second is a sound processing algorithm that can adjust the audio in response to the position of the user’s head. To maintain audio immersion, turning your head to the right should make a sound source previously in front of you sound like it is now on your left – anything else breaks the VR illusion very quickly. This requires a way to track the position of the user’s head, such as the built-in motion sensor of the Oculus Rift VR headset, and also a Head-Related Transfer Function (HRTF, explained in the aside below) to model how sound changes as it wraps around the head.
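The head-tracking requirement boils down to simple angle arithmetic. The sketch below is only an illustration of that idea, not any vendor's implementation: the function name and the sign convention (angles in degrees, positive meaning "to the right", yaw only) are my own assumptions.

```python
def relative_azimuth(source_azimuth_deg: float, head_yaw_deg: float) -> float:
    """Direction of a sound source relative to the listener's nose.

    Assumed convention (not from any particular SDK): angles are in
    degrees, 0 is straight ahead in the virtual room, and positive
    values are to the right. The result is wrapped into (-180, 180].
    """
    relative = (source_azimuth_deg - head_yaw_deg) % 360.0
    if relative > 180.0:
        relative -= 360.0
    return relative


# A source straight ahead in the room, with the head turned 90 degrees
# to the right, should now be heard 90 degrees to the listener's left.
print(relative_azimuth(0.0, 90.0))  # -90.0
```

The renderer would then pick its positioning cues for the relative angle rather than the room angle, and repeat this every time the motion sensor reports a new head orientation.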

There are many additional considerations for VR audio, such as sound reflections and echoes, acoustic shadows of other objects in the virtual environment, the Doppler Effect for moving audio sources, and probably dozens more, but simultaneously solving the two conditions above is already a huge step in the right direction.

At CES 2015 I visited two different exhibitors attempting to demonstrate just that, each taking a slightly different approach on the road to immersive 3D audio.

Aside: how binaural sound works

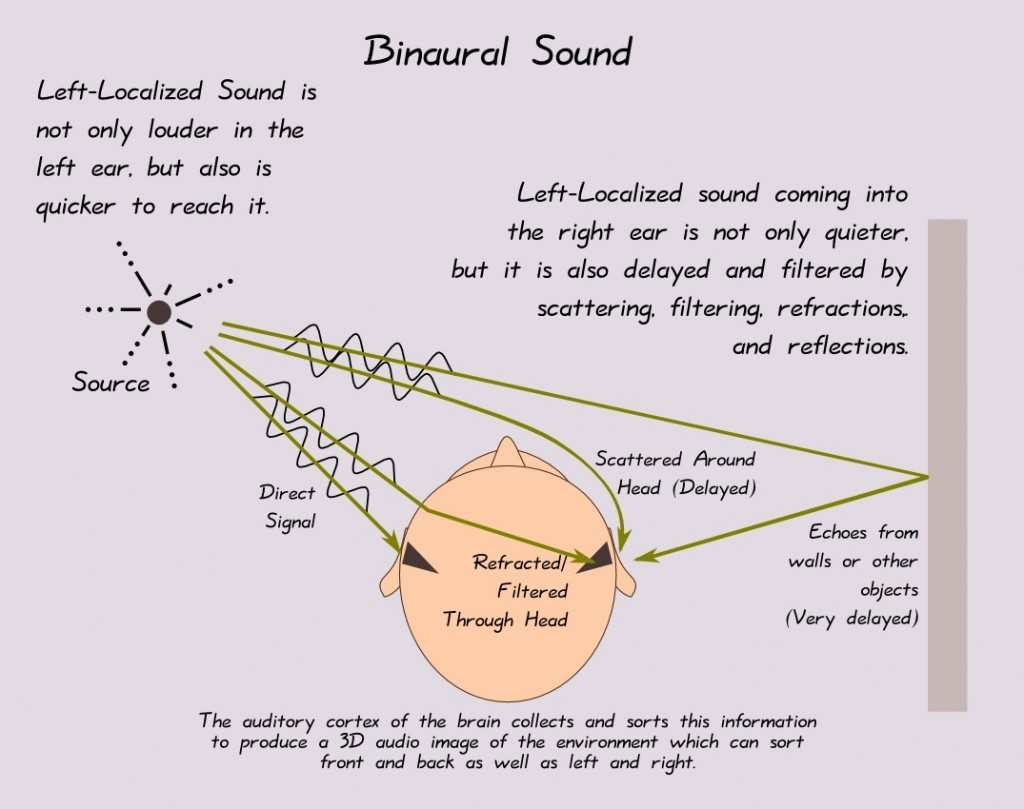

Humans locate sound sources in three-dimensional space based on two factors.

The first is the time differential between when sound waves arrive at the left and right ears – for instance, sound generated by a source located in front of you and to the left will reach your left ear slightly earlier than your right (it will sound slightly louder at the left ear as well).
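To get a feel for how small this time differential is, here is a rough sketch using Woodworth's classic spherical-head approximation, ITD = (r / c) · (θ + sin θ). The head radius and speed of sound are typical textbook values that I am assuming here, and the formula only covers sources in the front half of the horizontal plane.

```python
import math

HEAD_RADIUS_M = 0.0875     # assumed average head radius
SPEED_OF_SOUND_M_S = 343.0  # assumed speed of sound in air at about 20 °C


def interaural_time_difference(azimuth_deg: float) -> float:
    """Approximate interaural time difference in seconds.

    Uses Woodworth's spherical-head model, which is a simplification.
    Positive azimuth means the source is to the right; a positive result
    means the sound reaches the right ear first, a negative result means
    the left ear hears it first.
    """
    if not -90.0 <= azimuth_deg <= 90.0:
        raise ValueError("this approximation only covers -90..90 degrees")
    theta = math.radians(abs(azimuth_deg))
    itd = (HEAD_RADIUS_M / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))
    return math.copysign(itd, azimuth_deg)


# Source in front and 30 degrees to the left: the left ear leads.
print(interaural_time_difference(-30.0))
```

With these assumed values, a source directly to one side arrives at the far ear roughly 0.66 milliseconds late, which is the largest delay this model produces.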

The second is the way the sound is shaped by our inner/outer ear, head, and face anatomy. Two identical sound waves originating from two slightly different points in space will be modified differently by the pinna and ear canal on their way to the eardrum. How sound entering the ear from a specific direction is modified by the listener’s anatomy before it reaches the eardrum is characterized by the Head-Related Transfer Function (HRTF).

Binaural sound is a term for stereo audio recordings that utilize the above factors to relay an immersive, three-dimensional sound experience in a rather convincing way over headphones – just the sort of thing you need when using a VR setup.

Purpose-made binaural recordings are done with mics mounted inside the ears of a dummy head that is then immersed in the sound field being recorded. In order to correctly approximate the HRTF, the dummy should be a realistic mold of the average human head. In-ear microphones can also be worn by a performer or audience member, in effect replacing the dummy head with the wearer’s real head. There are even in-ear earphones with built-in binaural microphones on the market, like this rather inexpensive solution.

Obtaining true binaural recordings for VR applications – let’s say a movie or video game soundtrack – is highly impractical, but what if we could approximate the binaural recording by putting a virtual head in a virtual room? Let’s say this imaginary room has a 5.1 channel surround sound setup – most movies and many games already have 5.1 soundtracks. Using this 5.1 soundtrack and our virtual head in concert with an HRTF, we can approximate binaural sound in order to convey a 3D sound experience over regular stereo headphones.
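In signal-processing terms, the virtual-room idea usually means filtering each speaker channel with a pair of impulse responses (the time-domain form of the HRTF, one per ear) and summing the results. The sketch below shows only that general technique; the function names are mine, and the tiny impulse responses are made-up placeholders rather than measured HRTF data.

```python
def convolve(signal, impulse_response):
    """Plain direct-form convolution of two lists of samples."""
    if not signal or not impulse_response:
        return []
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, sample in enumerate(signal):
        for j, tap in enumerate(impulse_response):
            out[i + j] += sample * tap
    return out


def binaural_downmix(channels, hrirs):
    """Mix virtual speakers down to a (left, right) headphone signal.

    channels: dict of speaker name -> list of samples
    hrirs:    dict of speaker name -> (left_ear_ir, right_ear_ir)
    Both structures are hypothetical, chosen for this illustration.
    """
    if not channels:
        return [], []
    length = max(
        len(samples) + max(len(hrirs[name][0]), len(hrirs[name][1])) - 1
        for name, samples in channels.items()
    )
    left = [0.0] * length
    right = [0.0] * length
    for name, samples in channels.items():
        left_ir, right_ir = hrirs[name]
        for k, value in enumerate(convolve(samples, left_ir)):
            left[k] += value
        for k, value in enumerate(convolve(samples, right_ir)):
            right[k] += value
    return left, right


# Placeholder data: one click from the front-left virtual speaker.
# The far (right) ear gets it two samples later and at half the level.
toy_hrirs = {"L": ([1.0, 0.0, 0.0], [0.0, 0.0, 0.5])}
left, right = binaural_downmix({"L": [1.0]}, toy_hrirs)
print(left)   # [1.0, 0.0, 0.0]
print(right)  # [0.0, 0.0, 0.5]
```

A real implementation would use measured impulse responses hundreds of samples long and a faster FFT-based convolution, but the structure is the same: one filter pair per virtual speaker, summed into two headphone channels.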

Fraunhofer

Germany-based Fraunhofer are veterans of digital audio, having had a hand in the creation of the MP3 and AAC audio codecs and countless other developments in the field. Their 3D audio technology is called Cingo and is a software solution that relies on tracking information from a head-mounted VR display – currently the target is the Samsung Gear VR.

Cingo also has loudness optimization and equalizing algorithms designed for optimal soundtrack playback even in noisy environments with smartphones and tablets (in fact, these aspects of Cingo are already used in Google Nexus devices in a limited capacity). I was more curious about its 3D audio components for VR applications, however.

Fraunhofer actually had three demos available to illustrate the potential of the Cingo 3D audio technology. The first utilized a 2-channel stereo soundtrack and simply illustrated the head-motion compensation of the software, using the sensors on the Gear VR to get positional info on the user’s head and applying it to the audio. The tracking worked well, but the overall experience still left something to be desired.

Panning 2-channel audio is just the first step towards 3D sound. It helps if the audio is not simply stereo in the first place, as the typical stereo audio recording lacks the positioning cues that are such an integral part of how we perceive sound in space.

Fraunhofer’s second demo utilized 5.1 surround audio, using virtualized sound sources and an experimentally-derived HRTF to turn it into a sort of pseudo-binaural recording for headphone playback, and then applying the motion tracking algorithm used in the 2-channel demo.
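I don't know how Cingo does this internally, but one plausible way to combine virtual speakers with head tracking is to re-aim each speaker relative to the head and then look up the closest direction for which an HRTF measurement exists. In the sketch below, the speaker angles follow the common ITU-style 5.1 layout, while the 15-degree measurement grid and all function names are assumptions for illustration. The LFE channel is left out because bass carries little directional information.

```python
# Nominal 5.1 speaker directions in degrees (positive = right).
SPEAKER_AZIMUTHS_DEG = {"L": -30.0, "R": 30.0, "C": 0.0, "Ls": -110.0, "Rs": 110.0}


def wrap_degrees(angle: float) -> float:
    """Wrap an angle into the range (-180, 180]."""
    wrapped = angle % 360.0
    return wrapped - 360.0 if wrapped > 180.0 else wrapped


def nearest_measured_azimuth(azimuth_deg: float, grid_step_deg: float = 15.0) -> float:
    """Snap a direction to a hypothetical HRTF measurement grid."""
    return wrap_degrees(round(azimuth_deg / grid_step_deg) * grid_step_deg)


def hrir_directions_for_head_yaw(head_yaw_deg: float) -> dict:
    """For each virtual speaker, pick the HRTF direction to filter it with."""
    return {
        name: nearest_measured_azimuth(wrap_degrees(azimuth - head_yaw_deg))
        for name, azimuth in SPEAKER_AZIMUTHS_DEG.items()
    }


# Head turned 30 degrees to the right: the right speaker is now dead ahead.
print(hrir_directions_for_head_yaw(30.0))
# {'L': -60.0, 'R': 0.0, 'C': -30.0, 'Ls': -135.0, 'Rs': 75.0}
```

Snapping to the nearest measured direction is the crudest option; interpolating between neighboring measurements would avoid audible jumps as the head turns.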

The third and final demo went one step further by using a 12-channel (11.1) “theater” mix as the original sound source. After all, 5.1 audio contains much less than full 3-dimensional positioning information. There isn’t even a pure left- or right- panned source in 5.1. The theater mix did a better job and sounded noticeably more convincing, especially during a movie chase sequence in the demo where sound sources panned and moved around quickly.

The third demo did very much remind me of a true binaural recording – but one that additionally pans around in response to head motion. Vertical positioning seemed to be the most challenging – the only demo that was able to create some semblance of it was a synthesized 3D sound demo, and even that was diminished somewhat when the visual cues were taken away (as we know, our eyes can have a major effect on how and what we hear; see, for instance, the McGurk effect). Nonetheless, it created an immersive and enjoyable sound presentation during the movie demo – certainly better than any other VR audio experience I’ve had so far.

3D Sound Labs

French startup 3D Sound Labs is taking another approach to developing 3D audio – one that is independent of existing VR devices. In contrast to Fraunhofer’s pure software solution, 3D Sound Labs’ product consists of the Neoh headphones and the Neoh player, the corresponding audio/video app. The Neoh headphones have a built-in sensor that tracks head movements, whereas Fraunhofer’s solution relies on the motion sensors built into an existing VR headset. This means Neoh has wider potential applications, at least in theory (though it currently works only with the Neoh player app, which is available only on iOS for the time being).

The Neoh headphone looks familiar – in fact, it seems to be using the same OEM shell as the Photive X-bass from my recent wireless headphone roundup. As a stereo headphone it sounds alright – not great, but passable.

3D Sound Labs of course uses the same idea of virtualizing multiple sound sources from the data stored on a 5.1 channel sound track, and using the head tracking sensor on the headphones to pan in real time with the user’s head movements. The sensor on the headphones is connected to the iPhone or iPad via Bluetooth. Unfortunately, the headphone itself still needs to be plugged in via wire for audio, so this is not yet the wire-free solution I wanted.

Here’s the rub – I couldn’t try the demo because the tracking sensor had a lot of trouble maintaining the Bluetooth connection at the show – not surprising considering the sheer number of wireless signals at the biggest CE show on earth. Still, the thought process behind the headset is promising, and the company has set a tentative release for spring 2015, with a Kickstarter to come first.

Conclusion

In addition to the enormous entertainment potential in movies and various VR applications, personal 3D audio seems like an important part of the headphone equation moving forward. Running additional processing on audio isn’t usually considered hi-fi, but there’s no ignoring that functional virtual 3D would be the biggest step forward in “soundstaging” for headphone applications in a long, long time.

The current 3D audio implementations all have a ways to go – the technology is still very much nascent, and the market has yet to really take shape, but after spending time with the Fraunhofer demo at CES I’m very excited by the prospects. One thing I am sure of – the ideal 3D audio solution for VR will be wireless, synthesized from an existing mix without requiring binaural recordings, and will absolutely change the way we experience headphones.

4 Responses

Thank you, this is very cool – the demos are quite good!

Will have to try out the software when I have some downtime.

Great article.

I thought you might be interested in our virtual speaker/surround software, Out Of Your Head (http://fongaudio.com), that generates real-time binaural audio from 2 to 8 channel audio.

Essentially Out Of Your Head recreates the sound of speakers in a room very accurately. You not only get the localization of the sound coming from the virtual speakers in the room, but you can also tell what brand of speakers, the room acoustics, etc. Out Of Your Head is based on measurements of actual speakers and rooms. It is not synthesized.

Plus it works with any headphones and any DAC, etc.

You can listen to samples and/or download a free trial to hear it for yourself using your own gear.

Prerendered samples:

http://fongaudio.com/demo

Free trial download:

http://fongaudio.com/out-of-your-head-trial-download

Please contact me via our website if you have any questions,

-Darin Fong

Yes, you’re absolutely right. Until we have something like “virtual concert” experiences for VR, head tracking is more for video-based applications like movies, games, immersive tours, etc. I just found it fascinating how all these parts are coming together and approaching a practical solution.

There are some albums mastered in 5.1 and even 4-channel quadraphonic from the 70s, but not many. I don’t see a binaural sound processor that you can just feed these records to becoming household tech, but maybe as a standalone music playback app it could work.

The only binaural recordings I have are a few Chesky releases: http://www.chesky.com/genres/binaural . I highly recommend the headphone demo disc – it’s very cool 🙂

I’ve been intrigued by 3D audio for a while.

The research has always been on and evolving. Now, with the incredible computing power chucked out of the tiniest processors and wearable sensors on the rise, true mainstream 3D audio isn’t far off.

I see the benefits of motion tracking in creating a more immersive V-environment. But, I don’t think it’s a desirable feature for music. Perhaps, we could just have a portable DAP that could do the pseudo-binaural processing? Well…the music would have to be recorded in 5.1 at the very least. Which I don’t see happening, as most of the music today is recorded separately and then mixed.

Do you know of any good albums recorded à la binaural?

Thanks for the engrossing read ljokerl.